Based on a submission by Kevin Ashley about his NSF-funded project to teach writing and argumentation with AI-supported diagramming and peer review.

Argumentation is central to reasoning in many domains, including law, natural science, and policy. Students often take positions without justifying them, or cite irrelevant information as evidence. ArgumentPeer helps students learn how to build strong arguments; it has been deployed in professional and graduate settings (law) and in undergraduate settings (research methods).

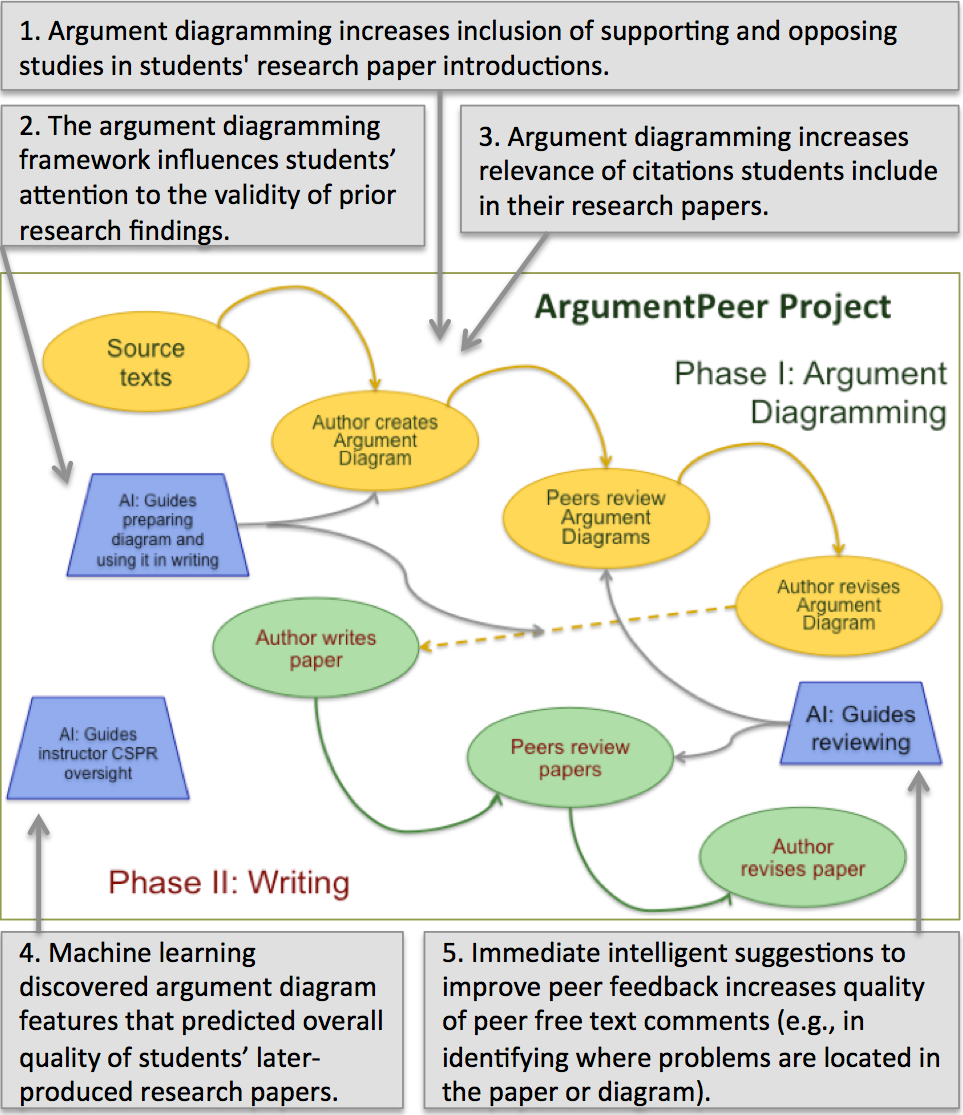

In the ArgumentPeer project, students plan written arguments by diagramming them with automated feedback. They review each other’s argument diagrams based on key criteria; machine learning helps reviewers make critiques more localized and actionable. The system transforms diagrams into copy-friendly texts, students write their arguments, and the peer-review cycle begins anew.

The ArgumentPeer process includes two main phases: I. Argument Planning, and II. Argument Writing. Fig. 1 shows an overview of the process and its underlying components and steps. In Phase I, students examine background readings and then diagram an argument for a research study they will conduct (or for a legal brief in a law class). An intelligent help system in the diagramming tool guides them. Students then submit their argument diagrams to a web-based system for peer review and revision (SWoRD), where they use a detailed rubric to evaluate their peers’ diagrams. After receiving the reviews, authors revise their argument diagrams and can then export the diagram content into an automatically generated paper outline.
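To make the diagram-to-outline export more concrete, here is a minimal sketch, assuming a diagram can be represented as a tree of labeled nodes. The `Node` class, the node kinds, and the `to_outline` function are hypothetical names chosen for illustration; the project's actual diagram format and export logic are not described here.

```python
from __future__ import annotations

from dataclasses import dataclass, field


@dataclass
class Node:
    """One box in a hypothetical argument diagram (assumed structure)."""
    kind: str                     # assumed labels, e.g. "claim", "evidence"
    text: str
    children: list[Node] = field(default_factory=list)


def to_outline(node: Node, depth: int = 0) -> list[str]:
    """Flatten a diagram tree into indented outline lines, depth first."""
    lines = [f"{'  ' * depth}- [{node.kind}] {node.text}"]
    for child in node.children:
        lines.extend(to_outline(child, depth + 1))
    return lines


# Placeholder content, invented purely for illustration.
diagram = Node("claim", "Hypothesis H", [
    Node("evidence", "Study A supports H"),
    Node("counterargument", "Study B conflicts with H"),
])
outline = to_outline(diagram)
print("\n".join(outline))
```

Each diagram node becomes one outline entry, and the nesting of the diagram becomes the indentation of the outline, which gives the author a skeleton to expand into prose.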

NSF Project Information

Title: DIP: Teaching Writing and Argumentation with AI-Supported Diagramming and Peer Review

Award Details

PIs: Kevin Ashley, Diane Litman, Christian Schunn

In Phase II, students write first drafts of their papers from these outlines and submit the drafts to SWoRD for peer review. Finally, authors submit their second drafts to SWoRD, and the final drafts can be graded either by peers or by the instructor. Across both phases, SWoRD has three key features: 1) Instructors can easily define rubrics to guide peer reviewers in rating and commenting on authors’ work. 2) SWoRD creates pressure for high-quality reviews, for example by automatically determining the accuracy of each reviewer’s numerical ratings using a measure of consistency against the mean judgment of other peers for the same papers. 3) A natural language processing component automatically evaluates the peer review comments to check that they are useful (e.g., that they specify problem locations and possible solutions).
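The second feature, rating accuracy as consistency with other peers, can be sketched as follows. This is an illustrative assumption, not SWoRD's documented formula: it uses the Pearson correlation between a reviewer's ratings and the mean rating that the other reviewers gave the same papers. The function names, the data layout, and the sample ratings are all hypothetical.

```python
from math import sqrt
from statistics import mean


def pearson(xs: list[float], ys: list[float]) -> float:
    """Pearson correlation; returns 0.0 when either series has no variance."""
    mx, my = mean(xs), mean(ys)
    cov = sum((x - mx) * (y - my) for x, y in zip(xs, ys))
    sx = sqrt(sum((x - mx) ** 2 for x in xs))
    sy = sqrt(sum((y - my) ** 2 for y in ys))
    if sx == 0 or sy == 0:
        return 0.0
    return cov / (sx * sy)


def reviewer_consistency(ratings: dict[str, dict[str, float]]) -> dict[str, float]:
    """Score each reviewer against the mean of the OTHER reviewers' ratings.

    ratings maps reviewer -> {paper: numerical rating}.
    """
    scores = {}
    for reviewer, own in ratings.items():
        mine, peers_mean = [], []
        for paper, score in own.items():
            others = [r[paper] for name, r in ratings.items()
                      if name != reviewer and paper in r]
            if others:  # skip papers nobody else rated
                mine.append(score)
                peers_mean.append(mean(others))
        # Need at least two shared papers for a correlation to be defined.
        scores[reviewer] = pearson(mine, peers_mean) if len(mine) >= 2 else 0.0
    return scores


# Made-up ratings on a 1-7 scale: ann, bob, and dan roughly agree; cat does not.
ratings = {
    "ann": {"p1": 6, "p2": 3, "p3": 5},
    "bob": {"p1": 7, "p2": 2, "p3": 5},
    "dan": {"p1": 6, "p2": 2, "p3": 4},
    "cat": {"p1": 2, "p2": 6, "p3": 4},
}
scores = reviewer_consistency(ratings)
for name, value in scores.items():
    print(f"{name}: {value:+.2f}")
```

In this toy data, the three reviewers who rank the papers similarly receive positive scores, while the reviewer who ranks them in the opposite order receives a negative one. Excluding a reviewer's own rating from the peer mean keeps the score from being inflated by self-agreement.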