In addition to original primers, CIRCL occasionally features relevant primers found in the literature. We welcome new primers on similar topics, but written more specifically to address the needs of the cyberlearning community. Have a primer to recommend? Contact CIRCL.

Title: A Primer on Neural Network Models for Natural Language Processing

Author: Yoav Goldberg, Bar-Ilan University, Israel

Abstract

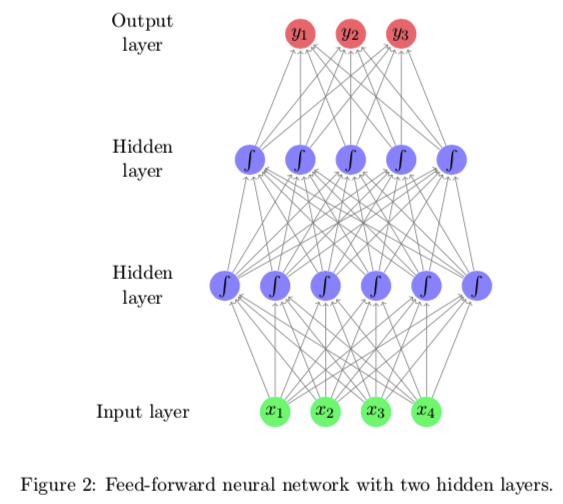

Over the past few years, neural networks have re-emerged as powerful machine-learning models, yielding state-of-the-art results in fields such as image recognition and speech processing. More recently, neural network models have also begun to be applied to textual natural language signals, again with very promising results. This tutorial surveys neural network models from the perspective of natural language processing research, in an attempt to bring natural-language researchers up to speed with the neural techniques. The tutorial covers input encoding for natural language tasks, feed-forward networks, convolutional networks, recurrent networks and recursive networks, as well as the computation graph abstraction for automatic gradient computation.

Citation

Goldberg, Y. (2016). A primer on neural network models for natural language processing. Journal of Artificial Intelligence Research, 57, 345-420.